Food Is Medicine. But First It Has to Become Data.

Exploring how meal data can close the loop between behavior and biology

We hear the phrase “food is medicine” frequently now. It shows up in scientific papers, in public health discussions, and increasingly in conversations around longevity and preventive health. The phrase is compelling because it captures something that most people intuitively understand. What we eat influences nearly every biological system in the body. Diet affects metabolism, inflammation, energy levels, sleep quality, and long-term disease risk.

Yet there is an interesting contradiction hidden in the idea. Medicine relies on measurement, but the way we eat rarely involves any. Physicians do not prescribe drugs without understanding dosage, timing, and interactions. Clinical trials rely on carefully structured data about both inputs and outcomes. With food, however, we tend to operate in a far less structured way. Meals come and go throughout the day with very little record of what we ate, when we ate it, or how our bodies responded afterward.

If food truly functions as a form of medicine, then we should be able to observe its effects with the same level of attention that we apply to other biological interventions. That observation led me down an unexpected path that eventually resulted in an app I call Food Health AI.

The project did not begin as a product idea. It started as a small household experiment.

A FODMAP Experiment at Home

My wife Kirsten was experimenting with a low-FODMAP diet. For those unfamiliar with the term, FODMAP refers to a group of fermentable carbohydrates (fermentable oligosaccharides, disaccharides, monosaccharides, and polyols) that can cause digestive discomfort in some people. The diet is often used as a diagnostic process to determine which foods may be triggering symptoms.

As part of that process she was using a device called FoodMarble. The device measures hydrogen and methane levels in breath samples after meals. These gases are produced during fermentation in the gut, so they provide clues about how certain foods are being processed by the digestive system.

The concept is clever and the device works reasonably well. The friction came from the companion app. Each meal had to be entered manually before breath readings could be interpreted properly. Over time the process of logging food began to take more effort than the actual measurement.

One morning I sat down and built a simple web-based logging tool just for her. It allowed her to quickly jot down what she had eaten without navigating the heavier interface in the FoodMarble application. The tool was not intended to go beyond our house. It was simply a quick solution to reduce the friction of tracking meals during the experiment.

For a while that small tool worked perfectly.

Then Kirsten had to travel.

Once she left the house, the little logging utility suddenly needed to be accessible remotely. Making that change was straightforward technically, but it shifted the way I thought about the tool. What had been a temporary household utility now behaved more like a lightweight application.

Not long after that, I began building an iPhone version.

A Missing Input in My Personal AI System

I have been building what I informally call AI/Steve, a personal AI system that aggregates many streams of information about my daily life. Over the past few years I have collected sleep data, activity metrics, observational notes, and results from small personal experiments. The system helps organize those signals and occasionally surfaces patterns that would be difficult to see otherwise.

Despite all of that data collection, something important was missing.

Food.

What we eat influences many of the signals we track in health analytics. Calories and macronutrients affect metabolic responses. Meal timing can influence sleep patterns. Certain foods appear to influence energy levels or recovery after exercise. Even mood and mental clarity sometimes seem to shift depending on dietary patterns.

Yet my AI system had almost no structured information about what I was eating each day. Without that input, the system was attempting to interpret outcomes without knowing one of the most important drivers.

The food logging tool I was building for Kirsten suddenly looked like the missing component in the loop.

Why Food Logging Usually Fails

Anyone who has tried to track food for more than a few weeks knows the primary obstacle.

Food logging is tedious.

Most nutrition apps require users to search large food databases, estimate portion sizes, and manually assemble meals from individual ingredients. Even people who begin enthusiastically often abandon the process once the novelty wears off.

The friction lies in the input step.

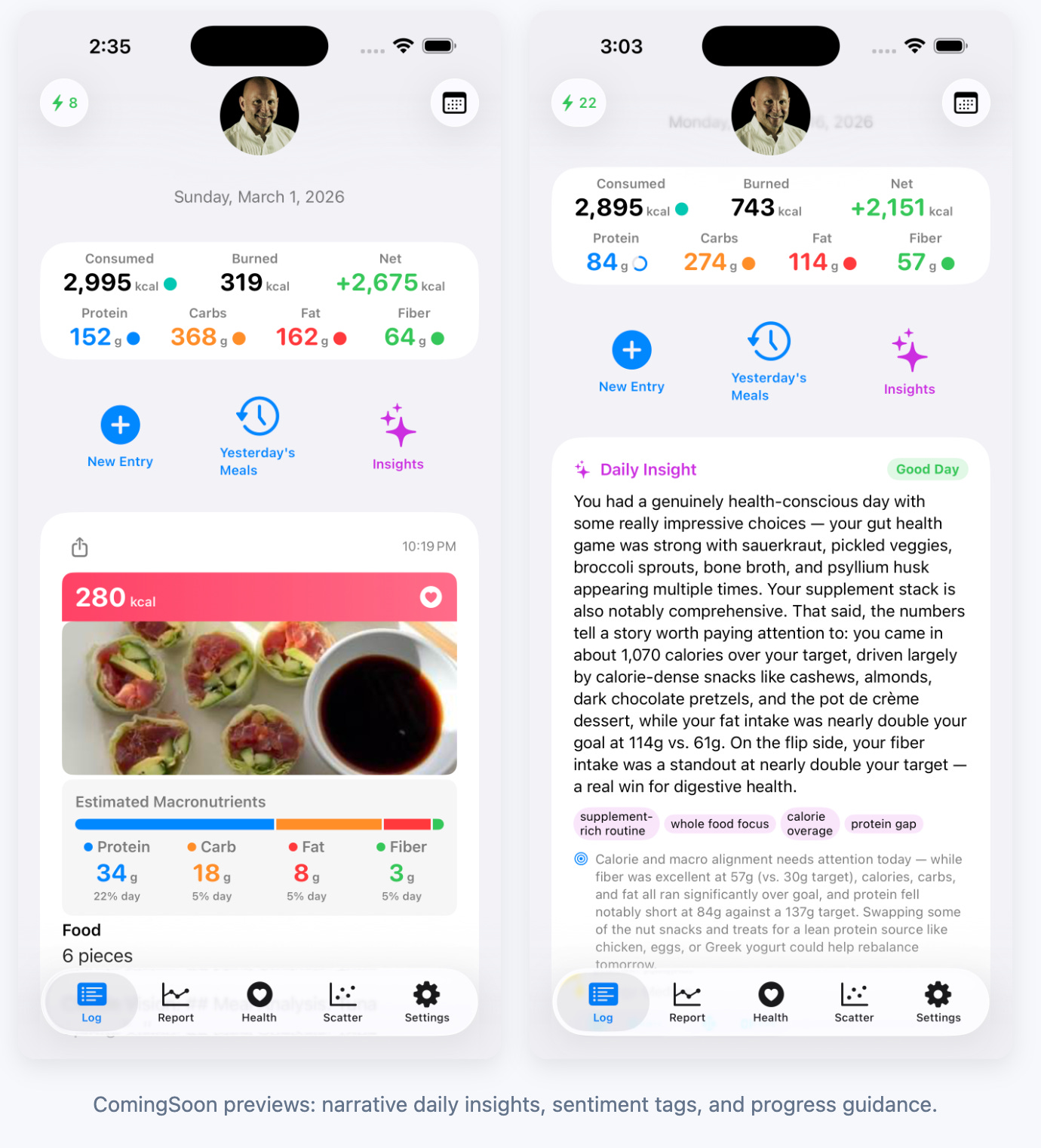

Recent advances in multimodal AI models offered an interesting alternative. Instead of typing out a detailed description of a meal, a user can simply take a photo. A vision model analyzes the image and produces a reasonable estimate of the foods present along with approximate calories and macronutrient breakdown.

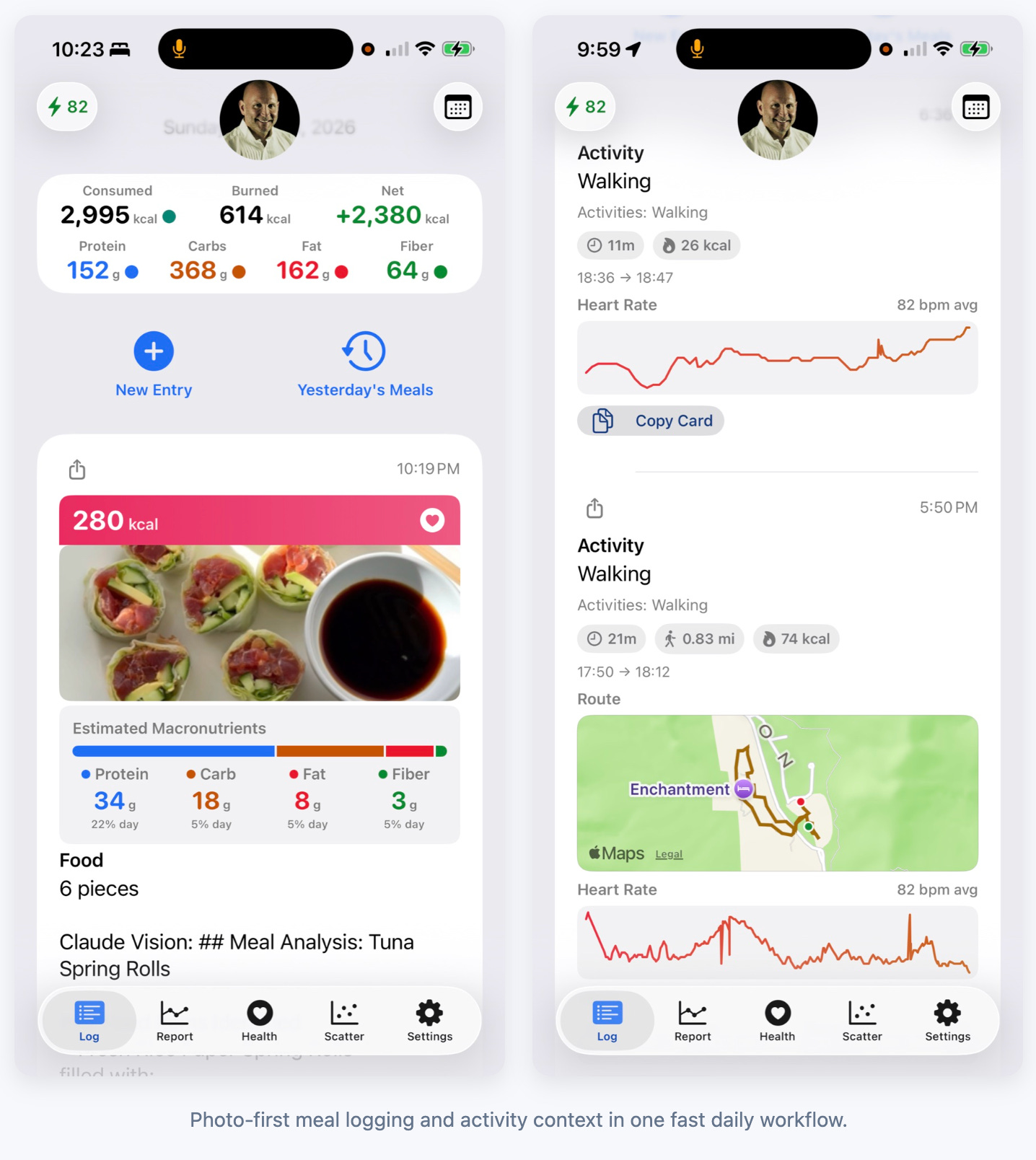

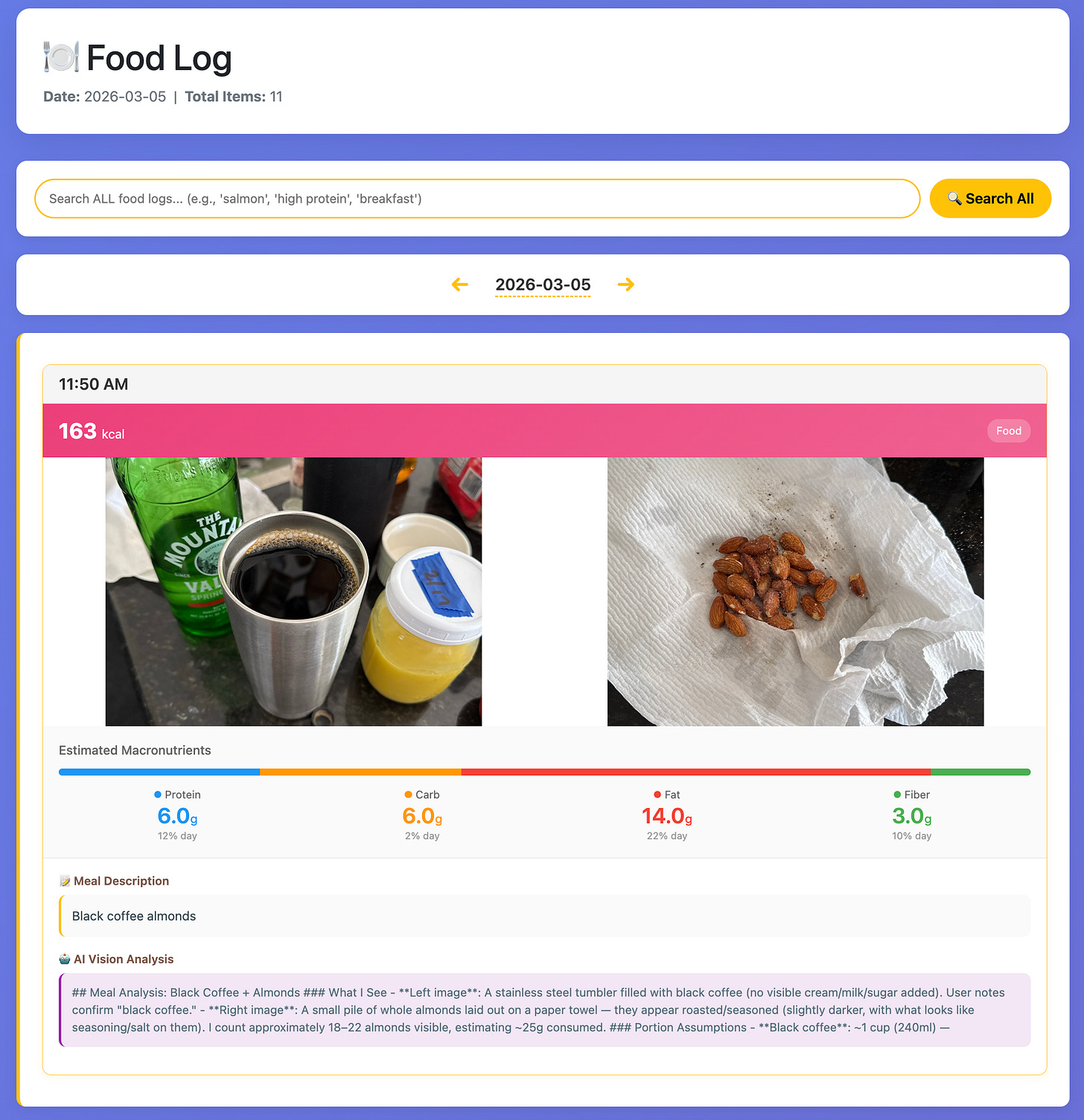

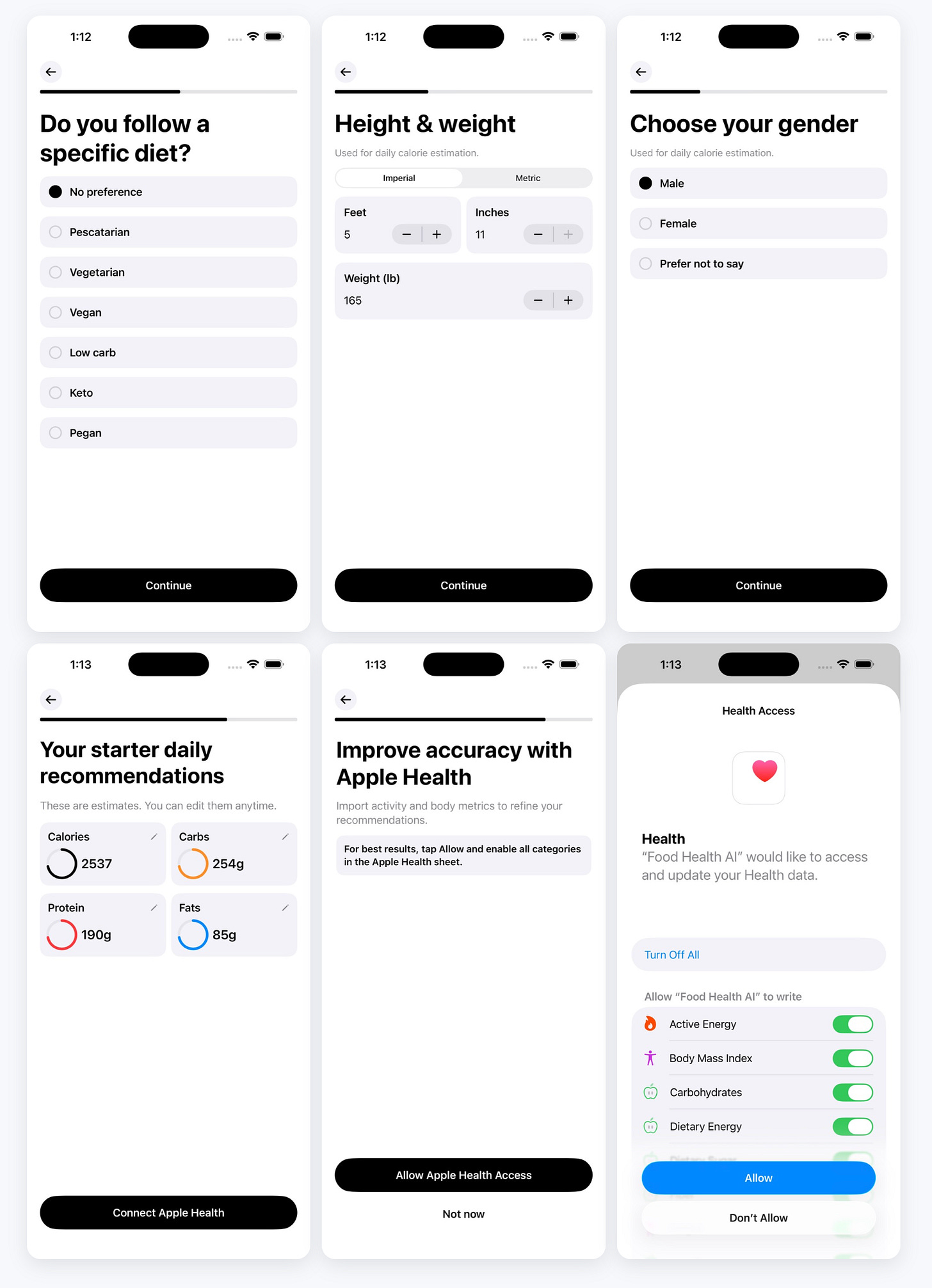

In the app I built, the workflow follows that general pattern. The user takes a photo of their meal and optionally adds a note describing anything the image might not capture clearly. The AI model analyzes the image and returns a narrative description along with estimated calories, protein, carbohydrates, and fats. A parser then extracts those numbers and converts them into structured data fields that can be stored and analyzed.
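The parsing step can be sketched in a few lines. This is a minimal illustration, not the app's actual parser: the narrative format (labels such as "Calories:" followed by a number), the `MealEstimate` type, and the `parse_meal_estimate` function are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class MealEstimate:
    """Structured fields pulled out of a model's narrative (hypothetical shape)."""
    calories: Optional[float]
    protein_g: Optional[float]
    carbs_g: Optional[float]
    fat_g: Optional[float]


# Assumed label spellings; a real model response may use different wording.
_LABELS = {
    "calories": r"\bcalories\b",
    "protein_g": r"\bprotein\b",
    "carbs_g": r"\bcarb(?:ohydrate)?s?\b",
    "fat_g": r"\bfats?\b",
}


def parse_meal_estimate(narrative: str) -> MealEstimate:
    """Find the first number that follows each label; None if a label is absent."""
    values = {}
    for field, label in _LABELS.items():
        # Allow up to 20 non-digit characters (": ", " about ", etc.) before the number.
        match = re.search(rf"{label}\D{{0,20}}?(\d+(?:\.\d+)?)", narrative, re.IGNORECASE)
        values[field] = float(match.group(1)) if match else None
    return MealEstimate(**values)


example = ("Grilled salmon with rice and broccoli. Calories: 620 kcal. "
           "Protein: 42 g. Carbohydrates: 55 g. Fat: 24.5 g.")
estimate = parse_meal_estimate(example)
```

Returning `None` for a missing field, rather than zero, keeps an unparsed value from being mistaken for a measured one later in the analysis. A sketch this simple does not handle thousands separators or ranges such as "500-600".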

From the user’s perspective the process becomes remarkably simple. Logging a meal often takes only a few seconds.

Reducing friction turns out to be the most important design decision in food tracking.

Building the App as a Local-First System

Another design decision emerged early in development. The application would store its data locally first rather than relying entirely on remote services.

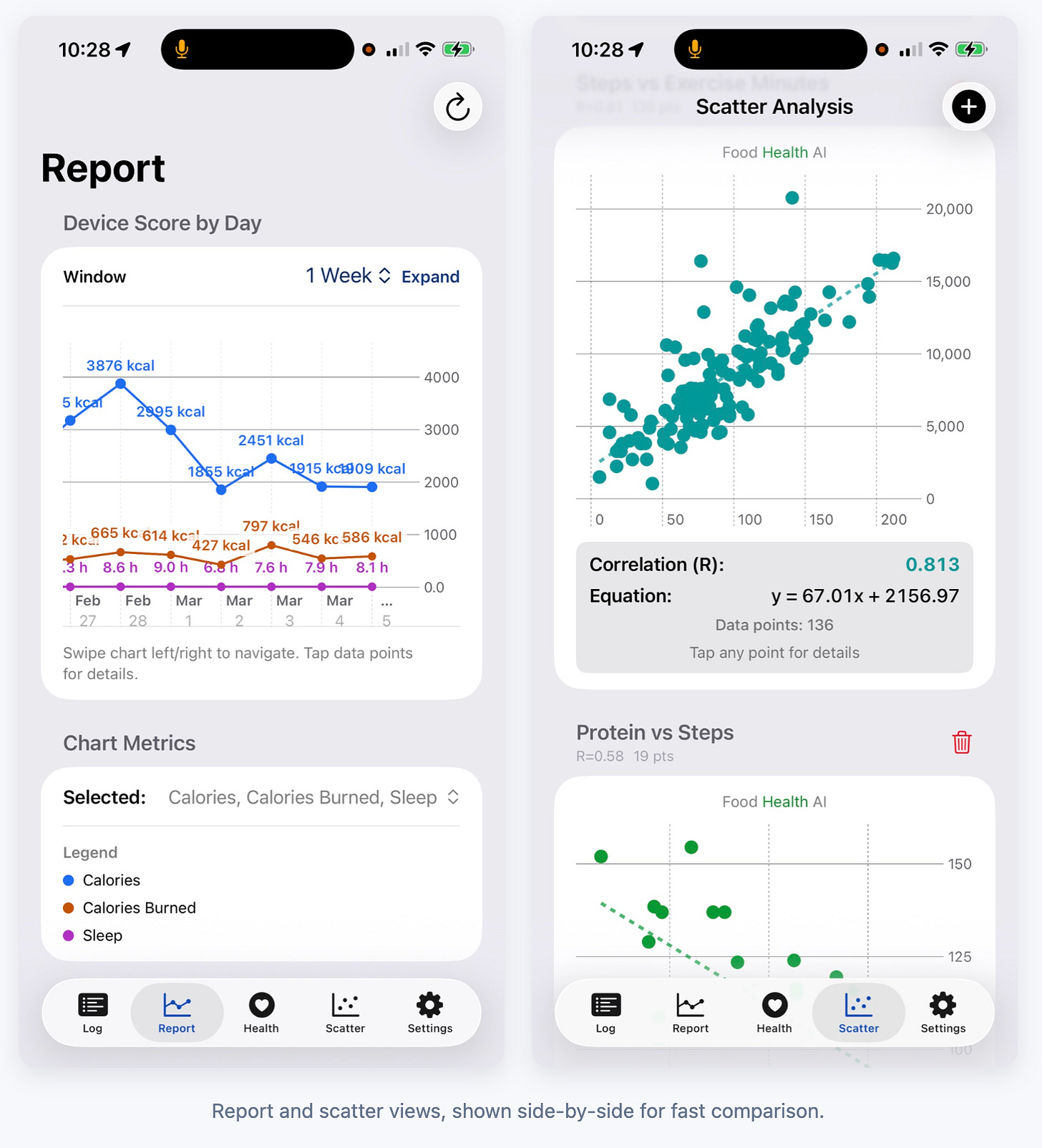

Each food entry is saved as a structured record along with its associated image. These records form a chronological log that can be used by the rest of the application. Reporting views, trend charts, and correlation analysis all draw from that same set of entries.
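One way to picture such a log is a single local table that every view reads from. The sketch below uses SQLite from Python's standard library purely for illustration; the schema, column names, and helper functions are hypothetical and say nothing about how the iPhone app actually stores its records.

```python
import sqlite3
from datetime import datetime

# Hypothetical schema: one row per meal, with a path to the saved photo.
SCHEMA = """
CREATE TABLE IF NOT EXISTS meals (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    eaten_at TEXT NOT NULL,
    description TEXT NOT NULL,
    calories REAL,
    protein_g REAL,
    carbs_g REAL,
    fat_g REAL,
    image_path TEXT
)
"""


def open_log(path=":memory:"):
    """Open (or create) the local meal log; no network access is involved."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def log_meal(conn, eaten_at, description, calories=None, protein_g=None,
             carbs_g=None, fat_g=None, image_path=None):
    """Append one structured entry and return its row id."""
    cur = conn.execute(
        "INSERT INTO meals (eaten_at, description, calories, protein_g, "
        "carbs_g, fat_g, image_path) VALUES (?, ?, ?, ?, ?, ?, ?)",
        (eaten_at.isoformat(), description, calories, protein_g,
         carbs_g, fat_g, image_path),
    )
    conn.commit()
    return cur.lastrowid


def daily_calories(conn):
    """Total calories per calendar day, computed from the same rows the user logged."""
    rows = conn.execute(
        "SELECT substr(eaten_at, 1, 10) AS day, SUM(calories) "
        "FROM meals GROUP BY day ORDER BY day"
    ).fetchall()
    return dict(rows)


conn = open_log()
log_meal(conn, datetime(2026, 1, 1, 8, 0), "Oatmeal", calories=400)
log_meal(conn, datetime(2026, 1, 1, 19, 0), "Salmon and rice", calories=600)
log_meal(conn, datetime(2026, 1, 2, 12, 30), "Chicken salad", calories=500)
totals = daily_calories(conn)
```

Because reports are plain queries over the logged rows, there is no separate analytics copy that could drift from what the user recorded.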

The advantage of this design is reliability. Users can continue logging meals even if network connectivity is poor. It also keeps the system transparent because the data used for analytics is exactly the same data the user originally recorded.

Over time the application began to feel less like a traditional calorie tracker and more like a personal nutrition observability tool. Instead of simply recording meals, the app makes it possible to look at patterns across days and weeks.

Connecting Meals to Health Signals

Once meals are captured in structured form, they can be connected to other data sources.

The app integrates with Apple Health so that activity metrics, calorie burn, and sleep data can be viewed alongside nutrition information. Seeing these signals together often reveals relationships that might otherwise go unnoticed.

For example, a user might notice that certain eating patterns correlate with deeper sleep, or that changes in macronutrient balance influence recovery after exercise. The scatter analysis feature in the app allows users to explore these pairwise relationships and examine how strongly two variables appear to move together.
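How strongly two variables move together is commonly summarized with the Pearson correlation coefficient. The sketch below shows that calculation on made-up numbers; whether the app uses this exact statistic is an assumption, and the variable names are illustrative only.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length series.

    Returns a value between -1 and 1, or None when either series is
    constant (the coefficient is undefined in that case).
    """
    if len(xs) != len(ys):
        raise ValueError("series must have the same length")
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return None
    return sxy / sqrt(sxx * syy)


# Made-up example: daily protein (grams) versus deep sleep (minutes).
protein = [60, 80, 100, 120]
deep_sleep = [50, 60, 70, 80]
r = pearson_r(protein, deep_sleep)
```

A coefficient near 1 or -1 on a handful of days is easy to get by chance, which is one reason to treat these charts as prompts for further observation rather than as conclusions.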

The goal is not to draw definitive conclusions about physiology. Instead, it is to create a framework for personal observation and experimentation.

The Economics of AI Analysis

One practical constraint appears whenever AI models are used for image analysis.

Each request costs money.

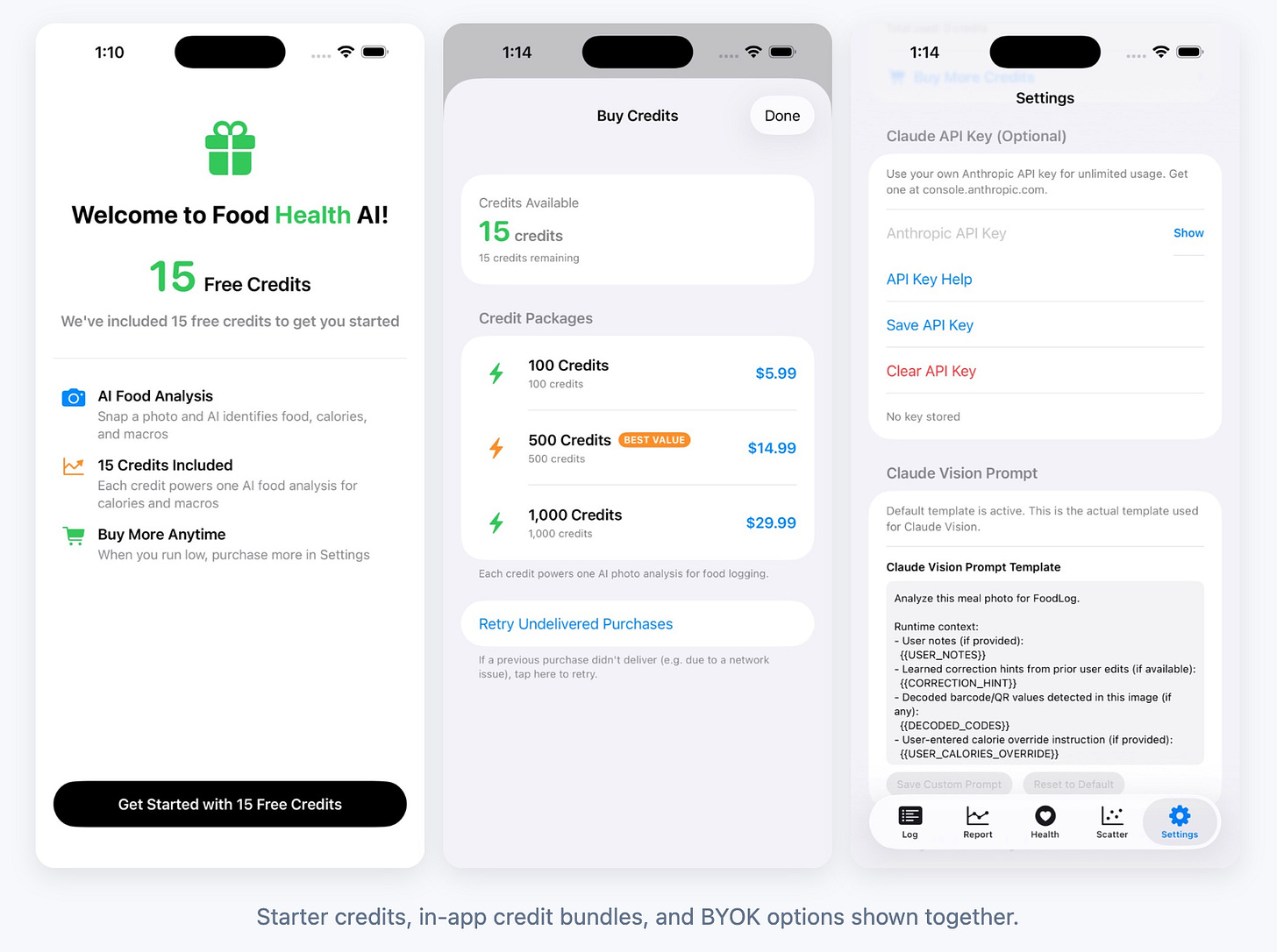

If an app allows unlimited image analysis for many users, the operational costs can grow quickly. The first version of the app addressed this by requiring users to bring their own API key. That approach meant the AI service was billed directly to the user’s account rather than to the application.

In theory this was elegant. In practice it proved slightly inconvenient for some people who were not accustomed to managing API credentials.

The current version therefore supports two approaches. Users can still supply their own API key if they prefer, but they can also purchase small bundles of analysis credits through in-app purchases. This dual model allows the system to remain flexible without creating unnecessary barriers to entry.
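The dual model boils down to a small decision made before each analysis request. The following is a hypothetical sketch: the `Account` type, the function name, and the choice to prefer the user's own key over purchased credits are all assumptions, not a description of the app's real logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Account:
    """Hypothetical per-user billing state."""
    api_key: Optional[str] = None  # user-supplied key, if any
    credits: int = 0               # remaining purchased analysis credits


def authorize_analysis(account: Account) -> str:
    """Decide how one image analysis is paid for, spending a credit if needed."""
    if account.api_key:
        # Assumed priority: a user's own key is billed to them directly.
        return "user_key"
    if account.credits > 0:
        account.credits -= 1
        return "credit"
    return "denied"


own_key = Account(api_key="sk-example")
buyer = Account(credits=1)
first = authorize_analysis(buyer)
second = authorize_analysis(buyer)
```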

An Unexpected Parallel

While finishing the app I learned about a product called Cal AI, which had been built by a group of high school students. Their application grew rapidly by introducing a social layer where users could share and compare meals within a network of friends.

Recently the company was acquired by MyFitnessPal.

What struck me about their approach was how different it was from the path I had taken. Their product emphasized social engagement and network effects. My version focused on connecting food data to a broader personal analytics system.

Both approaches explore the same underlying behavior from different angles.

Where This Might Lead

Food Health AI is still evolving. At the moment it functions primarily as a fast and flexible way to capture meals and convert them into structured nutritional data.

The more interesting possibilities appear when that data is combined with other signals. Sleep patterns, activity metrics, and metabolic indicators all interact with dietary choices in ways that are often subtle and highly individualized.

As wearable devices and health analytics continue to improve, tools like this may help individuals observe those relationships more clearly. Instead of relying solely on generalized dietary advice, people may begin to see how specific foods influence their own biology.

At that point the phrase “food is medicine” begins to acquire a more practical meaning.

Not just as an idea, but as something that can be measured.

Coming Soon

Given the sentiment-analysis work I have been doing with the RAG pipeline in my AI/Steve system, I built a lightweight version of that capability directly into Food Health AI.

The goal is practical: determine basic sentiment at daily, weekly, monthly, and all-time horizons against the backdrop of each user’s health and fitness goals, then translate that into progress reporting and next-step guidance.
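To make the idea of horizons concrete, here is a minimal sketch that averages per-entry sentiment scores over several look-back windows. Everything in it is assumed for illustration: the scores are taken as already computed numbers between -1 and 1, a month is approximated as 30 days, and the function name is hypothetical.

```python
from datetime import date, timedelta
from statistics import mean

# Look-back window in days for each horizon; None means no limit.
HORIZONS = {"daily": 1, "weekly": 7, "monthly": 30, "all_time": None}


def sentiment_by_horizon(scores, today):
    """Average sentiment per horizon.

    scores: list of (date, float) pairs, one per scored entry.
    Returns a dict of horizon name -> mean score, or None when no
    entries fall inside that window.
    """
    result = {}
    for name, days in HORIZONS.items():
        if days is None:
            window = [s for _, s in scores]
        else:
            start = today - timedelta(days=days - 1)
            window = [s for d, s in scores if start <= d <= today]
        result[name] = mean(window) if window else None
    return result


scores = [
    (date(2026, 4, 10), 0.5),
    (date(2026, 4, 5), -0.5),
    (date(2026, 3, 20), 1.0),
    (date(2026, 1, 1), -1.0),
]
summary = sentiment_by_horizon(scores, today=date(2026, 4, 10))
```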

The Android version became available on April 2, 2026.

Steven Muskal, Ph.D. is the CEO of Eidogen-Sertanty, Inc., a drug discovery informatics company. He has spent four decades working at the intersection of computational biology, AI, and drug discovery. He writes about AI, health, and the intersection of biology and technology at stevenmuskal.com.

As for a mix clip: I’ve been so busy and generative on the computer front that I haven’t scheduled a recent mix, but we’ll get back to that. Here’s another favorite from my recent 59th B-day mix. Maya, Tammy, Rick, Dom, Alan and Grant crush it on What’s Up.

It's an insightful evolution, bringing in food consumption metrics, perhaps obvious in retrospect, but tremendously important. Which leads to other questions about other impactful activities to include... working out, playing music, having sex...